I still remember my sysadmin’s face whenever I asked for a few GB of RAM for some J2EE app. I guess he wasn’t a big Java fan. Times have changed, and nowadays we tend to be offered more RAM than our apps reasonably need (of course we take what we are offered ;-)).

On the desktop side, users are a bit more concerned about memory consumption, and thus the JVM doesn’t have a particularly good standing on the client. It feels alien to developers and users to specify a maximum memory size (-Xmx), and obscene if the JVM doesn’t even return unused memory to the OS. The latter behavior, however, depends on the garbage collector in use. We’ll take a look at the behavior of the garbage collectors of JDK 7. If you don’t know which garbage collectors exist for JDK 7, you might consider reading these references first:

Java HotSpot Garbage Collection

Understanding GC pauses in JVM, HotSpot’s minor GC.

The test class is very simple and short enough to be pasted here. It first allocates a number of int arrays, then releases the references one by one and periodically asks the garbage collector to run.

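    import java.util.ArrayList;
    import java.util.List;

    public class GCTest {

        // Holds strong references so the arrays stay reachable until removed.
        private static final List<int[]> data = new ArrayList<>();

        public static void main(String[] args) throws Exception {
            // Phase 1: allocate 700 int arrays of about 4 MB each.
            for (int i = 0; i < 700; i++) {
                data.add(new int[1 * 1024 * 1024]);
                Thread.sleep(50);
                System.out.println("Allocation # " + i);
            }

            // Phase 2: drop the references again and request a collection on every 10th step.
            for (int i = 0; i < 700; i++) {
                data.remove(data.size() - 1);
                if (i % 10 == 0) {
                    System.out.println("(Trying to) run garbage collection");
                    System.gc(); // only a request; the JVM is free to ignore it
                }
                Thread.sleep(50);
                System.out.println("Deallocation # " + i);
            }
        }
    }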

Now we’ll take a look at the different garbage collectors available with JDK 7.
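As far as I can tell, the names in parentheses in the section titles that follow are the names the JVM itself reports for its collectors through the java.lang.management API. Here is a minimal sketch for checking which pair is active with a given set of flags. The class name ActiveCollectors is made up for this example, and the names printed depend on your JVM version and vendor.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper class, not part of the original test program.
class ActiveCollectors {

    // Collects the names of all garbage collectors registered in the running JVM.
    static List<String> collectorNames() {
        List<String> names = new ArrayList<>();
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            names.add(gc.getName());
        }
        return names;
    }

    public static void main(String[] args) {
        // Prints one collector name per line; the exact names vary between JVMs.
        for (String name : collectorNames()) {
            System.out.println(name);
        }
    }
}
```

Run it with the same -XX flag you pass to the test class to see which young and old generation collectors the flag actually selects.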

Parallel Scavenge (PS MarkSweep + PS Scavenge)

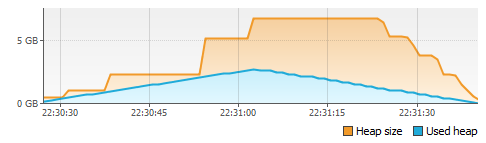

This is the default collector on my machine (Windows 7, 64-bit, JDK 7 64-bit). It’s a parallel throughput collector and can be selected by passing “-XX:+UseParallelOldGC”. This collector appears not to return any memory to the OS. Here’s the heap graph from VisualVM. The blue line shows the amount of heap used by the test program. The orange line shows how much memory the JVM has allocated from the OS for the heap. Finding out the “real” memory consumption is a science of its own, but for the sake of simplicity we’ll take the working set as displayed by Process Explorer as the memory usage.

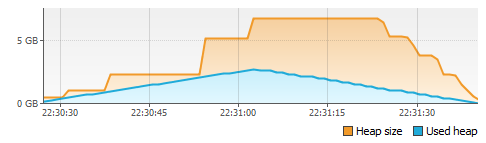
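If you don’t have VisualVM at hand, the two lines of the heap graph can be approximated from inside the JVM. The sketch below assumes that “used” corresponds to the blue line and “committed” to the orange one; I haven’t verified that VisualVM computes its values exactly this way. HeapWatch is a made-up class name.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;

// Hypothetical helper class, not part of the original test program.
class HeapWatch {

    private static final long MB = 1024L * 1024L;

    // Takes one sample of the heap: memory in use vs. memory committed by the OS.
    static String sample() {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        return String.format("used=%d MB, committed=%d MB",
                heap.getUsed() / MB, heap.getCommitted() / MB);
    }

    public static void main(String[] args) throws InterruptedException {
        // Prints five samples, one per second.
        for (int i = 0; i < 5; i++) {
            System.out.println(sample());
            Thread.sleep(1000);
        }
    }
}
```

Calling sample() from a background thread of the test program would give a rough text version of the graph: with a collector that returns memory, the committed value should drop during the deallocation phase.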

CMS – Concurrent Mark And Sweep (ConcurrentMarkSweep + ParNew)

Turn it on with “-XX:+UseConcMarkSweepGC”. CMS is a low-pause collector. In this test it also refused to return any memory.

Serial GC (Copy + MarkSweepCompact)

You switch it on with “-XX:+UseSerialGC”. The serial GC doesn’t sound very sexy in times of multicore hardware, but it’s the first GC to release memory to the OS. Nice start!

Parallel New + Serial GC (ParNew + MarkSweepCompact)

The serial collector for the old generation can be spiced up with a parallel collector for the new generation. Use “-XX:+UseParNewGC” for that combination. It keeps the nice behavior of the Serial GC and returns memory to the OS.

If you use the Serial GC you can even influence the resizing behavior with the MinHeapFreeRatio and MaxHeapFreeRatio VM arguments. The Serial GC appears to be the only GC that actually respects those arguments. Here’s an example with “-XX:+UseParNewGC -XX:MinHeapFreeRatio=5 -XX:MaxHeapFreeRatio=10”.

This results in a very close relation between used heap and allocated heap size. But of course you must consider the additional cost of adjusting the heap size often.
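To get a feeling for what such ratios mean, here is a back-of-the-envelope sketch. It assumes the simplified reading that, after a collection, the JVM grows the heap if less than MinHeapFreeRatio percent is free and shrinks it if more than MaxHeapFreeRatio percent is free, and it treats the heap as one single space. HotSpot’s real resizing policy is more involved, so take the numbers as an illustration only. The class name and the 400 MB figure are made up.

```java
// Hypothetical helper class illustrating the arithmetic behind the two ratios.
class HeapFreeRatioBounds {

    // Smallest capacity that still leaves minFreePercent of the heap free.
    static double minCapacity(double used, int minFreePercent) {
        return used / (1.0 - minFreePercent / 100.0);
    }

    // Largest capacity that leaves no more than maxFreePercent of the heap free.
    static double maxCapacity(double used, int maxFreePercent) {
        return used / (1.0 - maxFreePercent / 100.0);
    }

    public static void main(String[] args) {
        double usedMb = 400.0; // assumed amount of live data after a collection
        System.out.printf("capacity stays between %.1f MB and %.1f MB%n",
                minCapacity(usedMb, 5), maxCapacity(usedMb, 10));
    }
}
```

With 400 MB of live data, the ratios 5 and 10 keep the heap between roughly 421 MB and 444 MB under this model, which matches the tight coupling of the two lines in the graph.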

G1 (G1 Old Generation, G1 Young Generation)

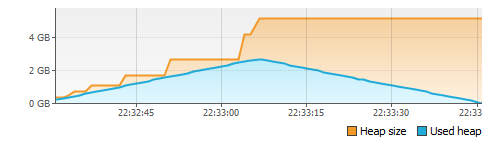

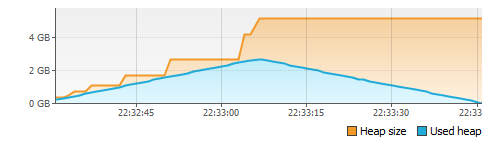

The newest garbage collector can be turned on with “-XX:+UseG1GC”. It works quite differently from all other collectors and, as far as I know, is intended to replace the CMS collector. It has the very nice property that it also returns free memory! The far-from-linear blue line might be due to VisualVM having only recently gained support for G1.

Conclusion

Only the Serial GC (with or without ParNew) and G1 release unused memory to the OS. I’m pretty happy to see that the new G1 behaves better than its predecessors. If memory consumption matters for your application and your app has memory peaks, you might consider one of those collectors.

I’m interested in hearing your experiences with those GCs.

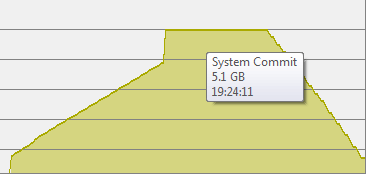

Update: Process Explorer view (in response to Kirk Pepperdine)

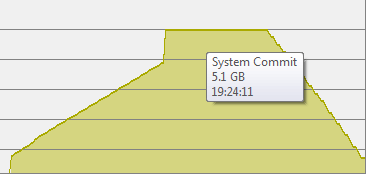

Here’s a graph from Process Explorer for the G1 test case. If you look at the figures displayed in the process tab, you’ll see that both the private bytes of virtual memory and the working set first grow and then shrink. The numbers are of course higher than the heap size due to perm gen, code cache, other non-heap memory etc.

And here’s the graph for CMS. As you can see, all memory is kept by the VM.
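To see part of the gap between heap size and the Process Explorer numbers, you can ask the JVM for its non-heap memory pools (perm gen, code cache and so on). This is only a sketch with a made-up class name, and it covers just the pools the JVM exposes: thread stacks and other native allocations are not included, so the sum will still be lower than the working set.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;

// Hypothetical helper class, not part of the original test program.
class NonHeapPools {

    // Sums the committed bytes of all memory pools that are not part of the Java heap.
    static long nonHeapCommittedBytes() {
        long total = 0;
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            MemoryUsage usage = pool.getUsage(); // may be null if the pool is no longer valid
            if (pool.getType() == MemoryType.NON_HEAP && usage != null) {
                total += usage.getCommitted();
            }
        }
        return total;
    }

    public static void main(String[] args) {
        // Lists every pool with its type, then the non-heap total.
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            System.out.println(pool.getType() + " : " + pool.getName());
        }
        System.out.println("non-heap committed bytes: " + nonHeapCommittedBytes());
    }
}
```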